Search results for tag #noai

From Global News:

"OpenAI violated Canadians’ privacy, watchdogs say in call for legal reform."

"The report criticizes OpenAI for rushing its ChatGPT products to market before having proper privacy safeguards in place, only addressing concerns after the fact, which violated accountability requirements in the federal Personal Information Protection and Electronic Documents Act (PIPEDA)."

"Michael Harvey suggested that ChatGPT by design 'cannot be compliant' with the province’s privacy law as currently written, which forbids companies from relying on 'implied consent' if it is not collecting the data itself, but rather from third-party websites."

New privacy report from the Canadian govt on OpenAI:

- OpenAI failed to get consent for scraped data.

- ChatGPT fails accuracy requirements.

- OpenAI fails requirements for personal data deletion.

Author's notes for the short poem (cinquain) by me that appears immediately upthread of this message.

I think this is my first attempt at the cinquain form. I think I did not get the meter right. It's harder than it looks to do that right. But I have limited time today, so this will have to do for a first pass.

I should say that I had a discussion on duck.ai with GPT-5 mini about this poem as I wrote various drafts of what is attached above. I let it do critiques and give me some info on the general form requirements, but I refused to let it do any of the actual writing. I feel very strongly that I want to do my own writing and not have that outsourced to another entity.

I chose to use duck.ai because it promises some degree of privacy. Not that this was a super-private project. But I think even when getting advice, it's useful to think about this question.

However, "we" did usefully discuss word choice and it's pretty good at being able to assess whether the sense of a particular word choice lands in the way I intend, so that was helpful in working through some changes I was contemplating.

#Writing #Poetry #WritingCommunity #AI #NoAI #privacy #DuckAI #LLMs

#Python #cryptography library (yes, the one that criticizes everything and everyone) is now vibecoded. Our future is truly bright!

Noticed because apparently "Claude" wrote a test that OOM-ed my system. But hey, #RustLang protects against memory errors, so it's fine to vibecode your security critical components.

Well, look here.

So, the word technofascism is being used more often these days, ever since Palantir published a literally fascist manifesto.

All the tech billionaires at Trump's second inauguration are a direct sign of technofascism happening.

Gen AI is inherently fascist, and I'm not the first to say it. Surveillance, trying to get people to delegate their thinking to the bot controlled by fascists, eliminating human ingenuity and creativity...

Thank you for having me on, @davidgerard

https://youtu.be/KheeSCTysTo?si=Q2bCT7IZZNY4qHOP

Here's where you can Late Pledge approximately $10 for an eBook now before it's published in June. I need it for rent money. Thank you:

https://www.kickstarter.com/projects/kimcrawley/technofascism-survival-guide/

From the BBC:

"Musk's AI told me people were coming to kill me. I grabbed a hammer and prepared for war."

"Adam is one of 14 people the BBC has spoken to who have experienced delusions after using AI. They are men and women from their 20s to 50s from six different countries, using a wide range of AI models."

Removing AI from schools is still best for students

While we welcome governments in Manitoba and BC taking seriously the harm AI can do to children, we argue that age verification is still not the best solution for creating an environment where students can thrive.

https://www.aicaution.ca/news/removing-ai-from-schools-is-still-best-for-students/

Does anybody else use the #Netlify user agent blocker extension? A month ago my site built fine. Now rebuilding it fails because the extension won't build. #buildFails #noAI

In the era of #LLM psychosis, it's important to emphasize that it is fine to talk to yourself.

Your own brain is entirely capable of being a sounding board. It can provide a second and a third opinion. It can look at things from another person's perspective. It can simulate complete complex interactions. And it can do all that in the privacy of your own head, with no extra energy cost. And it can give you a deeper understanding of yourself.

You don't need chatbots for that. You don't need to lean on their nazi owners. You don't need to pay for them, you don't need to share the intimate details of your life, you don't need to burn the planet in the process. You won't get hurt accidentally, you won't get abused or blackmailed. And your brain won't leave you helpless when someone suddenly decides helping you isn't profitable.

A bit of nature to calm the soul. #Earthday is April 22 - wherever you might be, take a moment to appreciate the beauty of nature.

Earth Smiles in Flowers -> https://chrystyne-novack.pixels.com/featured/earth-smiles-in-flowers-chrystyne-novack.html

#Wildflowers #flowers #nature #AYearforArt #bloomscrolling #buyintoart #mastoart #art #outdoors #homedecor #flower #creativetoots #color #naturePhotography #fotografie #smallbusiness #noAI

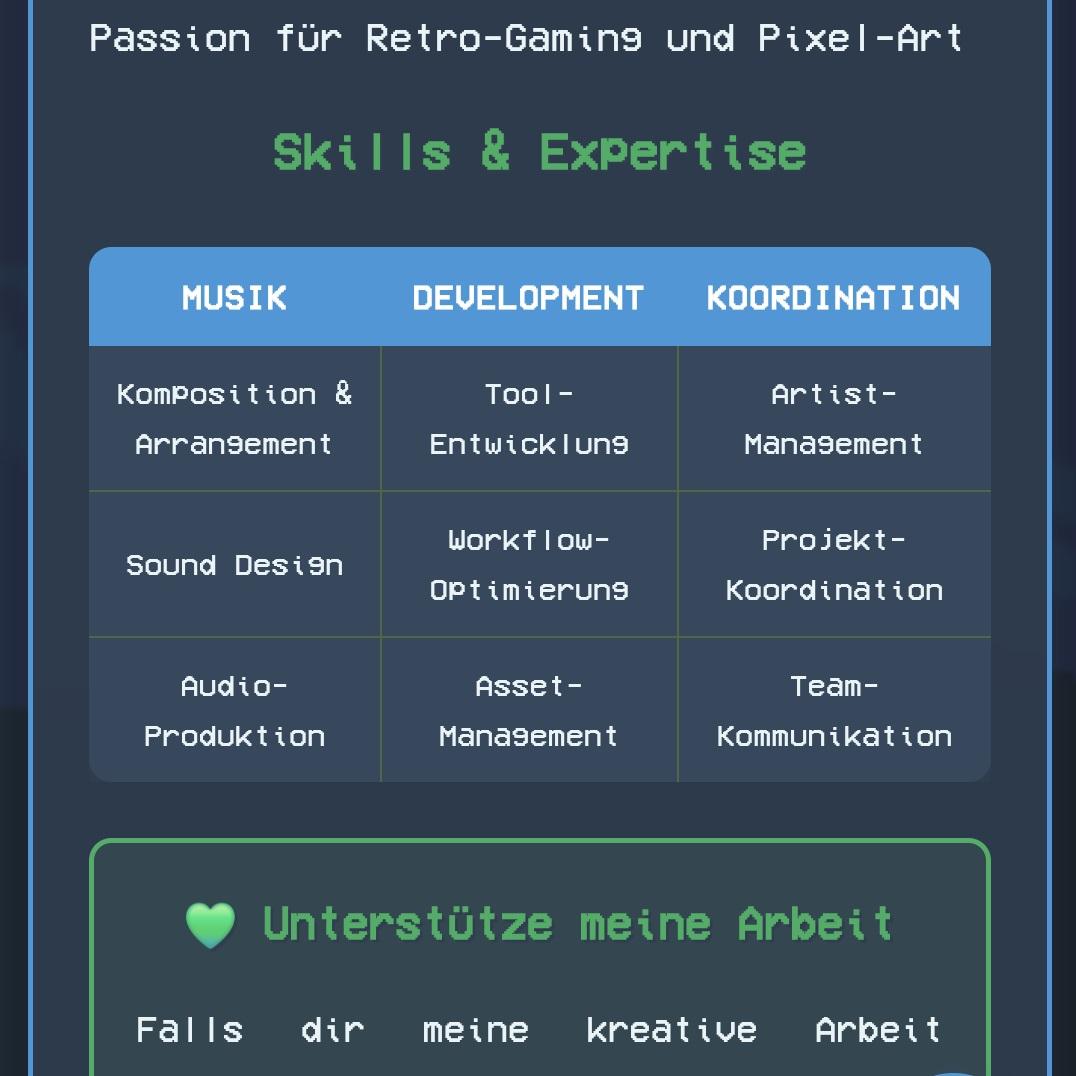

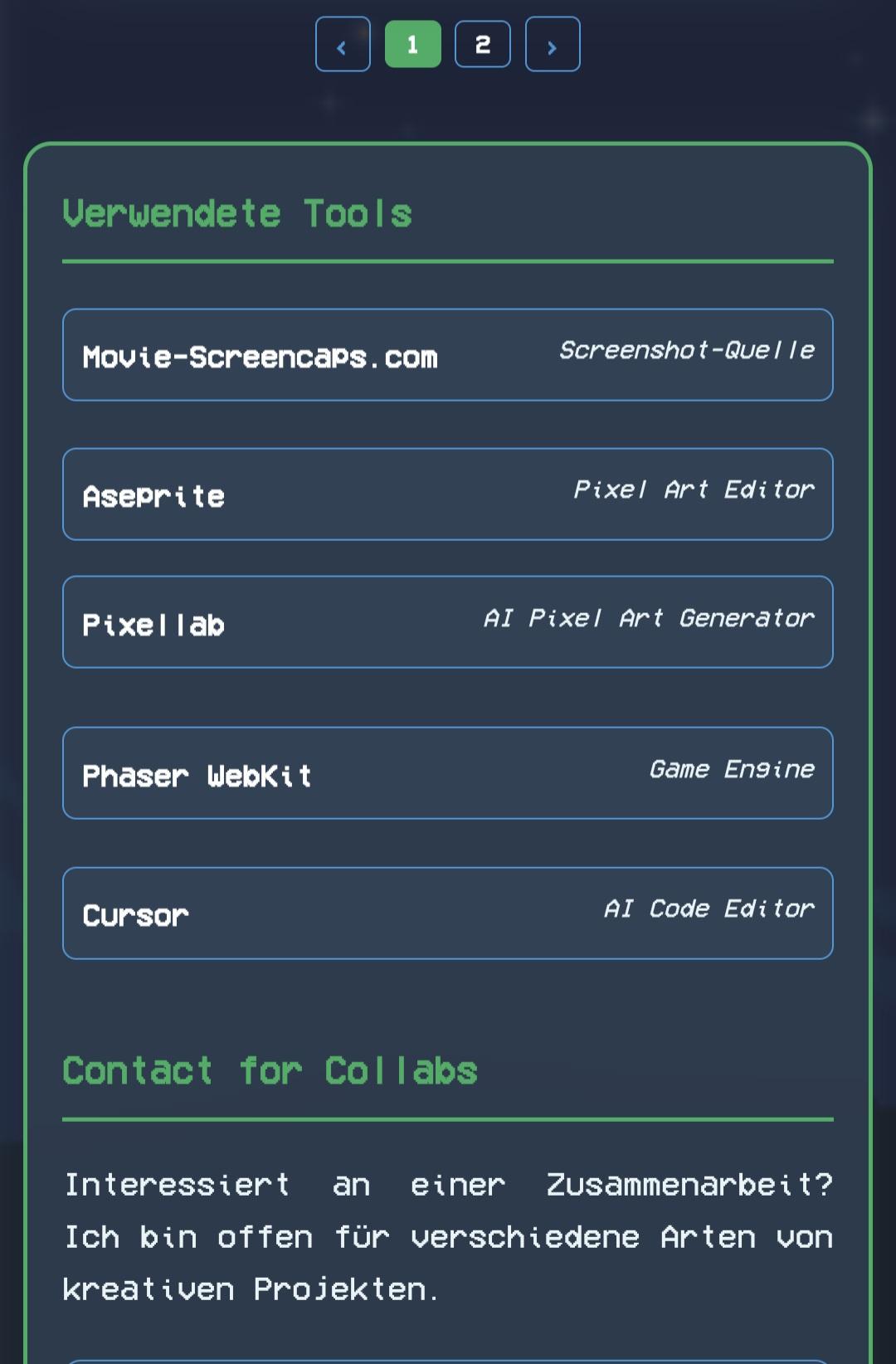

It's a tremendous honour to shine a spotlight on cool Gen AI resisters doing cool stuff.

Like Salma Alam-Naylor, David Gerard, Gerry McGovern, Olivia Guest, tante, Anthony Moser, lots of other great badasses.

But today's blog written about me is more about... me.

"I was a lazy ten year old for needing a calculator, today’s professors can't write one sentence."

Tell me, why was I such a bad ten year old in the 90s for being unable to do long division without a calculator, but today's professors cannot write a single sentence email without causing a bit of environmental destruction?

Is that double standard fair?

Absolutely not, and I'm furious.

In my blog, I use "Ginger Rogers did everything that Fred Astaire did, but backwards and in high heels" as a metaphor for how I became a professor.

I briefly mention a new study by some excellent researchers in Turkey. And the great work of Dr. Olivia Guest on Gen AI's destructive impact on academia as people are delighted to let their brains atrophy.

I applaud Anthony Moser and David Gerard, because they also deserve it.

I talk about how, while Stop Gen AI has lots of great comrades, most of the rest of the world doesn't give a fuck about us and we need your financial support for survival.

I mention how I quit being a professor because I refuse to subject my students to the Gen AI torment nexus. And how the world has punished me accordingly.

And also how I punish myself.

I hope you feel my rage.

The bright #LLM future, next part.

git.gentoo.org is now effectively dead, being DDoS-ed by almost a million different IPs every day. Most of them are just performing a single request at a totally random URL. How are people supposed to deal with that? How can we distinguish a legitimate user who hit some URL from a scraper that distributes its operations over thousands of IP addresses?

If you use LLM crap, you're part of the problem. You support these bastards. You should be ashamed of yourself.

Gentoo, FLOSS, LLMs, depressing [SENSITIVE CONTENT]

#Gentoo is still one of the bright outposts in #FLOSS where human work is valued and #LLM contributions are banned. However, sometimes I feel that this matters very little.

After all, Gentoo is a distribution. While it has its own value, it cannot exist without all the software it is shipping. It makes no sense in isolation.

And let's be honest, I don't think you can avoid slop today. We are trying our best to sieve out the worst: the copywashing chardet, the vibecoded NIH Perl crypto packages… but it's just that.

As someone who bumps Python packages, let me tell you this: LLMs are omnipresent. I notice Claude in commit logs, I notice the blasphemy of agent instructions all over the place… and there's probably much more that I don't notice. With many core components giving in, you can't avoid it without literally freezing on old, vulnerable versions, or spending hours looking for alternatives or creating them.

FLOSS is dead. People don't care. They don't have a conscience. All they care about is the sick idea of "productivity", i.e. generating more slop.

The few of us who do care can do very little. We will continue doing our best until they kill us (as they're literally slowly killing the whole humankind). But that's it. Maybe it will pass once the bubble pops, maybe it won't. Either way, the damage is beyond repair. We will never be able to trust one another like we did. We will never again be a community building a better world.

It's just like everything nowadays. It's hard to find a good washing machine (one that will actually be repairable), good shoes (that won't fall apart shortly after the warranty expires), good food. You need lots of money, and even then you have to sieve through all the scammers who just sell the same shit with higher profit margin. #OpenSource is just another branch of business where people are trying to "sell" you shit, and don't care anymore if it explodes in your face. They don't even care if they're actually making a profit.

When I find a new band that I really like, I have to look them up to see if they are using AI to create the music. While listening to music tonight, I found 4 bands that are using AI to "create" their "music". Bloody fucking hell. I'm really over this shit.

AI is fucking ruining everything.

RE: https://infosec.exchange/@david_chisnall/116379211678912109

This is one of the best descriptions of AI that I have read so far:

"It isn’t a technology, it’s a branding term, and it’s a branding term used almost exclusively for things that have no social benefit."

Pseudo Nym boosted

David Chisnall (*Now with 50% more sarcasm!*)

@david_chisnall@infosec.exchange

I don’t know if this account is actually monitored, or just a publishing place, but you may have noticed that this post has received almost overwhelmingly negative responses.

You could disregard this as Mastodon bias, but keep in mind that the biggest bias on Mastodon is that people who understand and built core parts of the information technology that you use every day are massively over represented. This is probably the only place you will get a lot of replies from people who both understand technology and do not have a financial incentive to hype things to get large amounts of government funding.

EDIT: I should add, I used machine learning during my PhD and there are a lot of problems for which it is a really good fit. But, in the current climate, it’s generally safe to interpret ‘AI’ as meaning ‘machine learning applied to a problem where machine learning is the wrong solution’. It isn’t a technology, it’s a branding term, and it’s a branding term used almost exclusively for things that have no social benefit.

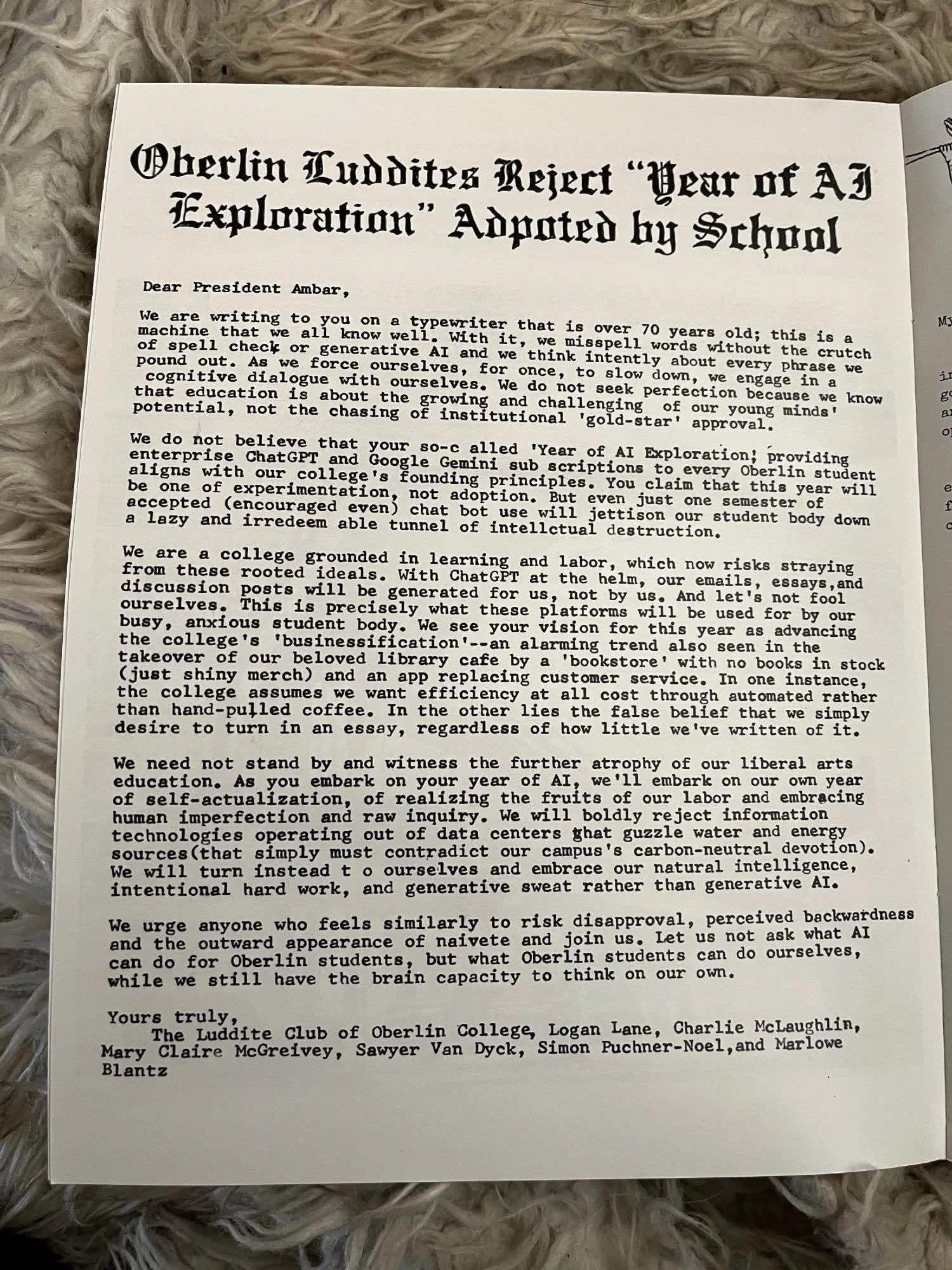

Oberlin #Luddites Reject "Year of #AI Exploration" Adopted by School

September 19, 2025

To President Carmen Twillie Ambar and Oberlin Community:

We are drafting this letter to you on a typewriter that is over 70 years old; this is a machine that we know well. With it, we ditch the crutches of spell-check and generative AI, and we think intently about every phrase we pound out. As we force ourselves, for once, to slow down, we engage in an inner dialogue.

Most of us did not enroll at Oberlin in search of superficial perfection, nor of lazy convenience. Rather, we chose it for its quirky individualism and a tangible education — the challenging of our young minds’ potential, not the chasing of institutional “gold-star” approval.

This college, which was built on a legacy of learning and labor, now risks straying from these principles. With ChatGPT at the helm, our emails, essays, and discussion posts will be generated for us. Not by us. And let’s not fool ourselves. This is precisely what these platforms will be used for.

You claim that this year will be one of “experimentation,” not adoption. But even one semester of accepted (even encouraged) chat-bot use will jettison our student body down a lazy, irredeemable tunnel of intellectual destruction.

We see this fetish for efficiency in other ways at this college: in the takeover of our beloved library cafe by a “bookstore” with no books in stock and an app replacing customer service — through automated instead of hand-pulled coffee.

Who gains from this if not the students? Sam Altman of ChatGPT and Sundar Pichai of Gemini may be plying their wares to colleges at low or no cost, but it’s no secret that every engagement with these platforms is an effort in surveillance in which our data is extracted and monetized. President Ambar, as you embark on your year of AI, we’ll embark on our own year of self-actualization — of realizing the fruits of our labor, and of embracing human imperfection and raw inquiry. We reject information technologies operating out of data centers that guzzle water and precious energy sources (and that contradict our campus’ carbon-neutral policy). We will not stand by and witness the further atrophying of our liberal arts education. Rather than strengthening Silicon Valley, we build our own skills and generative sweat. We urge all members of the Oberlin community who feel similarly to join us and sign our “AI-Opt-Out Letter.”

As for you, President Ambar, we ask that you terminate the College’s contract with Google Gemini and OpenAI. Our position may risk disapproval, perceived backwardness, and the outward appearance of naiveté. But let us not ask what the Silicon Valley oligarchs can do for Oberlin students, but what Oberlin students can do for ourselves while we still have the brain capacity to do so.

–The Oberlin Luddite Club

Charlie Mclaughlin, Logan Lane, Mary Claire McGreivey, Simon Puchner-Noel, Marlowe Blantz, and Sawyer Van Dyck

Yesterday, I read a vibe-coded script for the first time in my life, and I cried.

It wasn't ugly. "Ugly" is not the right term. It was as if someone wasn't able to comprehend beauty, but badly tried to mimic it. It felt like "malicious compliance" to beauty. The kind of awful verbose pedantry that feels wrong every step of the way.

It's the kind of code you'd expect in a corporate environment when you know that the code would be read by the top suits who have no idea about coding, but judge it by the volume and expect science fiction level of make-believe.

It's the kind of code that is abstracted away into the tiniest details. Every function returns a complex dataclass explaining precisely what it did, for no reason at all. What would be two lines of code is a function. What would be a function is a whole module. It's a caricature of good programming practices.

I was supposed to add support for modifying a second field on the same object via the GitHub API. I guessed it would take me about an hour to figure out the code enough to be able to do that, for what ought to be 2-3 extra lines. I suspected I'd discover that most of the code does precisely nothing. Just meaningless API exchanges that are absolutely unnecessary. It felt like the kind of parody of bureaucracy where you have to file 10 forms to do something, and only one of them actually means anything.

What used to be "do one thing well" became "doing ten totally random things is fine, as long as one of them happens to be what I need, and the whole thing doesn't blow anything up in an obvious way".

Perhaps it's just because this was a throwaway script. Maybe "production" stuff takes more, err, prompt refining? Maybe it actually can produce stuff that's comprehensible.

But if that code was any indicator, then I'm not going to believe that any big LLM contributions are actually reviewed by humans. A review will take more time than rewriting from scratch. This is a ticking time bomb. That LLM-generated code isn't introducing exploits right now is either a statistical accident, or it's just that nobody bothers.

Clarification: I didn't "prompt" it or request one. I'm not a hypocrite.

Wikipedia's new "no LLM" policy [SENSITIVE CONTENT]

Good news, as of 27 March. (I had missed this when it came out.)

Wikipedia content guidelines now prohibit the use of genAI tools, with two well-defined minor exceptions. An important step.

quote

"Text generated by large language models (LLMs) such as ChatGPT, Gemini, Claude, DeepSeek, or Grammarly often violates several of Wikipedia's core content policies. For this reason, the use of LLMs to generate or rewrite article content is prohibited, save for [...] two exceptions."

end-quote

The two listed exceptions are (i) basic copyediting support, under human review, and (ii) translation into English.

The new policy applies specifically to the English-language Wikipedia.

https://en.wikipedia.org/wiki/Wikipedia:Writing_articles_with_large_language_models

I'm working on a small "pitch video" where I explain the project to potential co-conspirators. And so I decided to show them an example of the kind of audience shock you could do, by starting an action scene in the middle of the most tense stuff ever.

I don't have any money, so the car (Honda) is #CGI done in #BLENDER3d (#noai)

2 #vfx shots 2 full cgi and 1 vfx shot.

Edited also in blender.

With First-of-Its-Kind Bill, #BernieSanders and #AOC Propose #Moratorium on New #AI #DataCenters

“These massive facilities are sucking up precious #water resources, paving over #farmland, driving #ClimateChange, and disrupting the fabric of communities,” said one supporter of the new legislation.

by Jake Johnson, Mar 25, 2026

"Two of the leading progressives in the US Congress, Sen. Bernie Sanders and Rep. Alexandria Ocasio-Cortez, announced legislation on Wednesday that would impose a nationwide moratorium on the construction of new artificial intelligence data centers amid mounting concerns over their insatiable consumption of power and water resources, impacts on the climate, and other harms.

"Sanders’ (I-Vt.) office said in a press release announcing the #ArtificialIntelligence #DataCenterMoratorium Act that the construction pause would remain in effect 'until strong national safeguards are in place to protect workers, consumers, and communities, defend privacy and #CivilRights, and ensure these technologies do not harm our #environment.'

"Sanders and #OcasioCortez (D-NY) are set to formally introduce their legislation at a press conference on Wednesday at 4 pm ET.

"Food & Water Watch (#FWW), which last year became the first national organization in the US to call for a total moratorium on the approval of new AI data centers, celebrated the first-of-its-kind bill and called on other members of Congress to 'move quickly to sponsor, champion, and pass' it. FWW’s groundbreaking call for a national AI data center moratorium was later echoed by hundreds of advocacy organizations at the state and national levels.

" 'We need a halt to the explosive growth of new AI data center construction now, because political and community leaders across the country have been caught completely off guard by this aggressive, profit-hungry industry,' Mitch Jones, FWW’s managing director of policy and litigation, said in a statement Wednesday. 'It has yet to be determined if—not how—the industry can ever operate in a manner that sufficiently protects people and society from the profusion of inherent hazards and harms that data centers bring wherever they appear.' "

Read more:

https://www.commondreams.org/news/ai-data-center-moratorium

#AWS #NoDatacenters #NoAI #NoNukesForAI #UnrestrictedGrowth #WaterIsLife #NoisePollution #AISucks #USPol

RE: https://mastodon.world/@HamonWry/116285647635820786

"Read the books they don’t want you to. That’s where the good stuff is."

--LeVar Burton, 2022

#ReadBannedBooks

#BanTheFascistsNotTheBooks

#LeVarBurton

#ReadingRainbow

#GenX

#Xennial

#ILoveBooks

#books

#NoAI

An excellent article by Jean-Christophe Bélisle-Pipon on OpenAI's reaction to the Tumbler Ridge tragedy:

OpenAI’s safety pledges in the wake of Tumbler Ridge aren’t AI regulation — they’re surveillance

"True AI regulation asks whether a model might facilitate or amplify harmful ideation through its interaction patterns. It asks how the system is built, what it’s tested for and what obligations attach to its deployment.

The current arrangement asks none of these questions. Instead, it builds a pipeline from private AI interactions to law enforcement, administered by a corporation, governed by proprietary policy.

I call this the surveillance substitution: a governance vacuum gets filled not with democratic regulation, but with corporate surveillance of users. It is not regulation of AI. It is regulation of the people who use AI, conducted by the AI company itself, with the police as the endpoint."

I forgot how slow-reading #Aristotle is. You really have to take it a word at a time.

Kind of like reading code, which I guess isn't something that people want to do anymore???

"Heh heh, I poured your codebase into clod, and it told me you're dumb. Heh heh."

It's not vibe coding... well... it is vibe coding, but the vibe is Beavis and Butthead. 🤦‍♂️

The fact that browsing without AI seems like such an innovative thing these days should make us all think twice.

Keep exploring!

https://www.xda-developers.com/tried-browser-that-doesnt-have-ai/

This is the fifth in the "Meta-sponsored series" of articles from the Linux Foundation.

I was not aware the Linux Foundation produced advertising copy for big tech, let alone the machine-learning "industry".

Dear Fedi friends,

I'd like to put together a list of people who are publicly resisting / calling out LLMs and AI slop.

Why? I enjoy reading my Fediverse feed in topical lists and I need something to counteract the unrelenting AI hype I see in the media.

Do you have any recommendations?

So far, at the top of my list I have:

@timnitGebru @emilymbender and @alexhanna of @DAIR

plus @cwebber @jaredwhite and @tante

Anyone else to recommend who advocates for #NoAI?

There, got the new game up at https://ddr0.ca/boloball/

Roll balls down a playfield. Beat your opponent.

#IndieGame #IndieGames #boloball #own #noai #FreeGame #indieweb
