Search results for tag #code

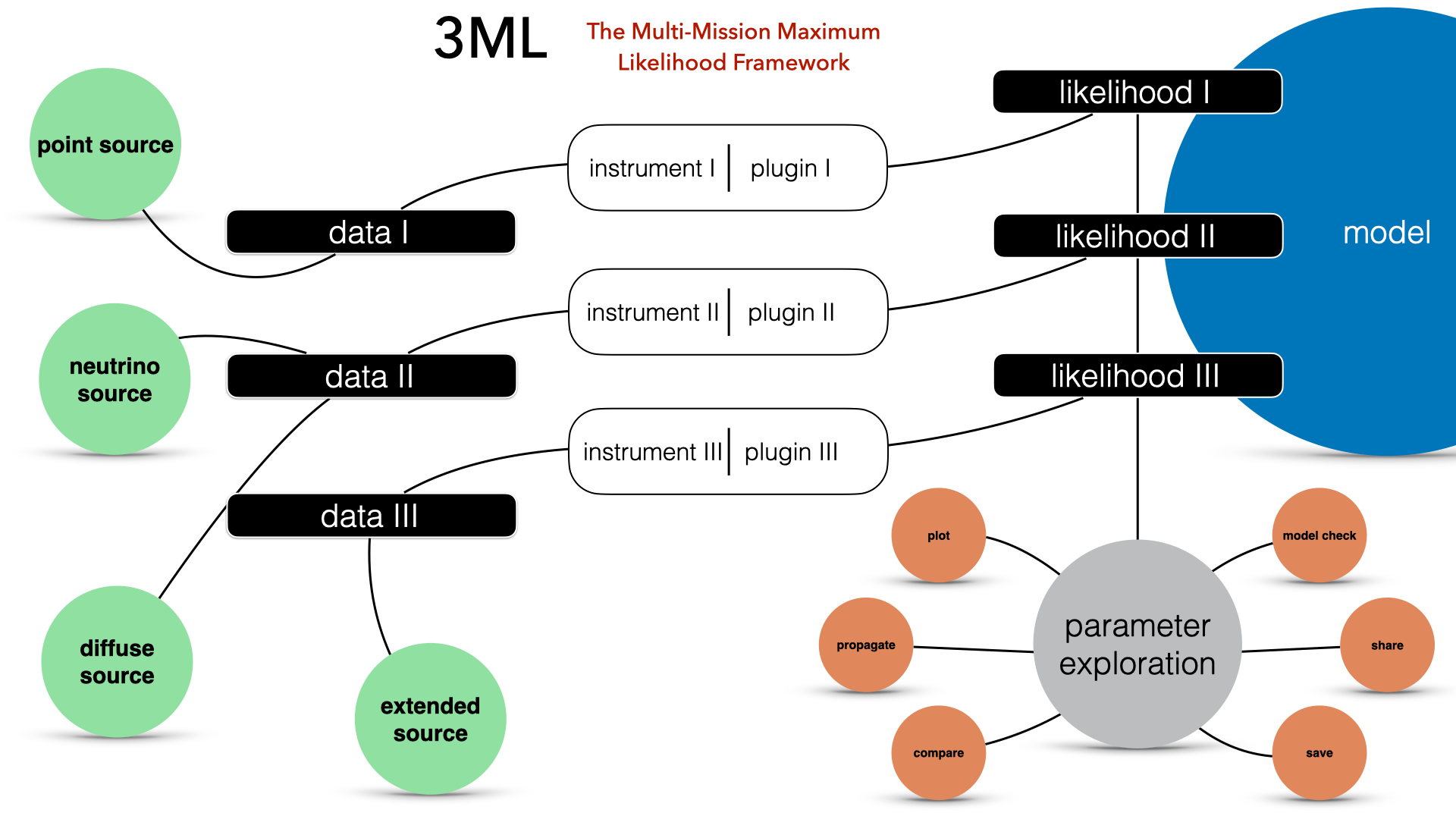

3ML: Framework for multi-wavelength/multi-messenger analysis

ThreeML is supported by the National Science Foundation (NSF) https://www.nsf.gov/

FYI:

https://heasarc.gsfc.nasa.gov/xanadu/xspec/

https://ui.adsabs.harvard.edu/abs/2015arXiv150708343V/abstract

https://arxiv.org/pdf/1507.08343

#space #code #python #bsd3 #fermi #xspec #hawc #science #astronomy

From technic960183

spherimatch:

A Python package for cross-matching and self-matching in spherical coordinates.

spherimatch is a Python package for efficient cross-matching and self-matching of astronomical catalogs in spherical coordinates. Designed for use in astrophysics, where data is naturally distributed on the celestial sphere, the package enables fast matching with an algorithmic complexity of O(N log N). It supports Friends-of-Friends (FoF) group identification and duplicate removal in spherical coordinates, and integrates easily with common data processing tools such as pandas.
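To make the idea of cross-matching on the sphere concrete, here is a minimal standard-library sketch. It is not spherimatch's API or its algorithm: the function names `angular_separation_deg` and `crossmatch` are hypothetical, and the declination-band prefilter is only a simple stand-in for whatever search structure the package actually uses. Catalog B is sorted once, and each source in catalog A then finds its candidate band by binary search.

```python
import bisect
import math


def angular_separation_deg(ra1, dec1, ra2, dec2):
    """Great-circle separation in degrees (haversine formula)."""
    ra1, dec1, ra2, dec2 = map(math.radians, (ra1, dec1, ra2, dec2))
    h = (math.sin((dec2 - dec1) / 2) ** 2
         + math.cos(dec1) * math.cos(dec2) * math.sin((ra2 - ra1) / 2) ** 2)
    return math.degrees(2 * math.asin(min(1.0, math.sqrt(h))))


def crossmatch(cat_a, cat_b, tolerance_deg):
    """Return sorted (i, j) index pairs separated by at most tolerance_deg.

    cat_a and cat_b are lists of (ra_deg, dec_deg) tuples.
    """
    # Sort catalog B by declination once.
    order = sorted(range(len(cat_b)), key=lambda j: cat_b[j][1])
    decs = [cat_b[j][1] for j in order]
    pairs = []
    for i, (ra, dec) in enumerate(cat_a):
        # The separation is never smaller than |delta dec|, so every match
        # must lie inside this declination band.
        lo = bisect.bisect_left(decs, dec - tolerance_deg)
        hi = bisect.bisect_right(decs, dec + tolerance_deg)
        for j in order[lo:hi]:
            if angular_separation_deg(ra, dec, *cat_b[j]) <= tolerance_deg:
                pairs.append((i, j))
    return sorted(pairs)
```

The haversine form handles the RA wrap at 0/360 degrees and sources near the poles without special cases, which is the main reason flat-sky distance formulas are avoided here. The band prefilter still checks every source in the band, so dense catalogs would want a proper spatial index.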

https://github.com/technic960183/spherimatch

https://technic960183.github.io/spherimatch/tutorial/xmatch.html

https://technic960183.github.io/spherimatch/install.html

https://pypi.org/project/fofpy/

https://linuxtut.com/en/68a22081e848030bc963/

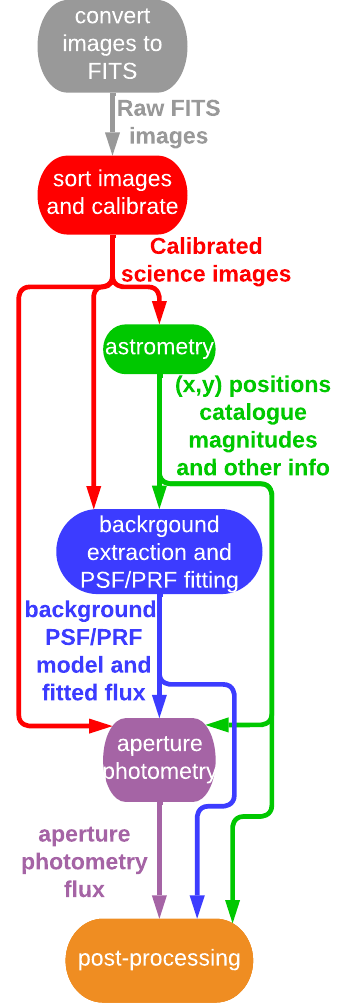

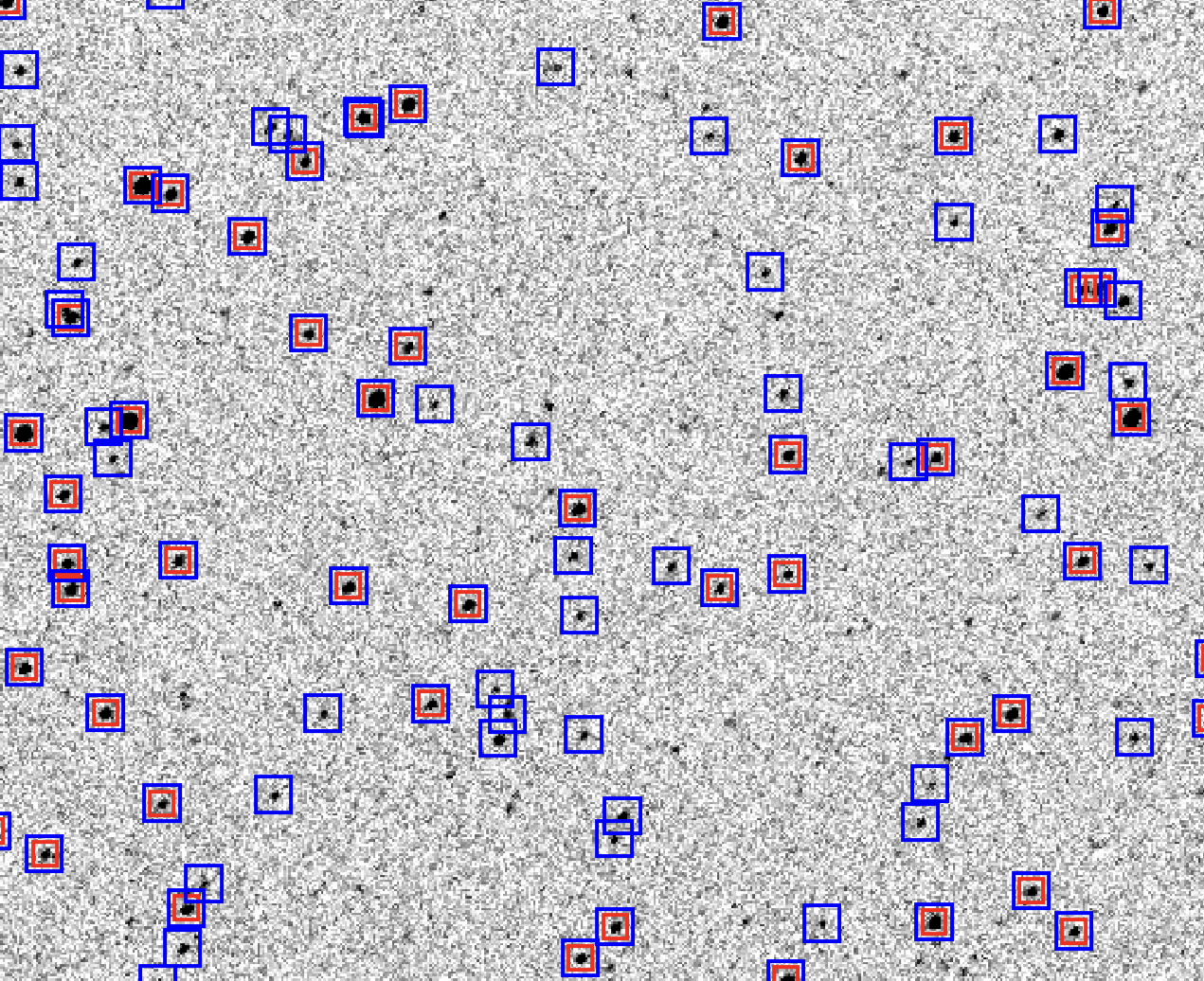

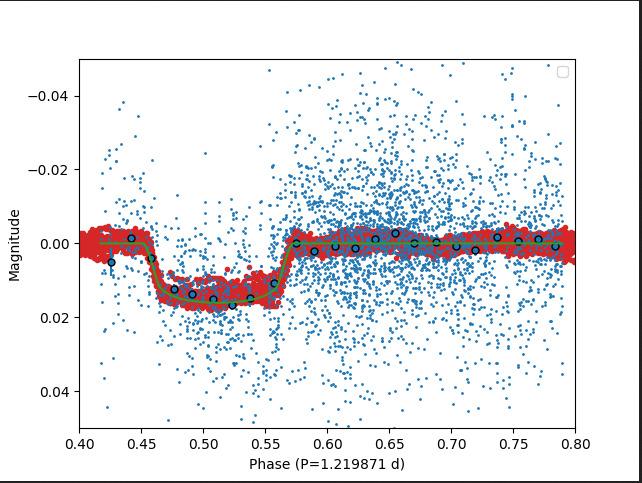

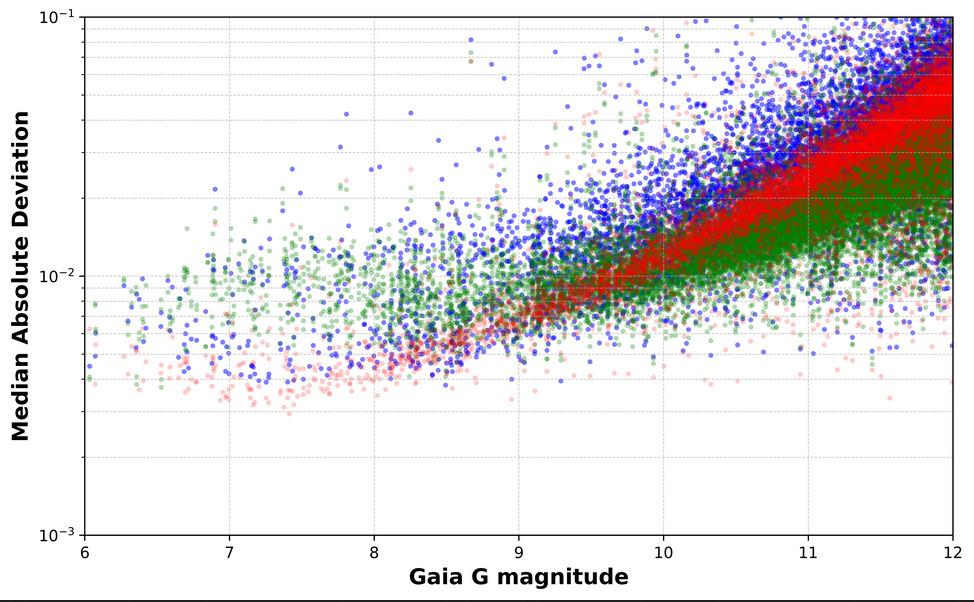

AutoWISP

Kaloyan Penev, Angel Romero and S. Javad Jafarzadeh have developed a software pipeline, AutoWISP, for extracting high-precision photometry from citizen scientists' observations made with consumer-grade color digital cameras (digital single-lens reflex, or DSLR, cameras), based on their previously developed tool, AstroWISP. The new pipeline is designed to convert these observations, including color images, into high-precision light curves of stars.

"We outline the individual steps of the pipeline and present a case study using a Sony-alpha 7R II DSLR camera, demonstrating sub-percent photometric precision, and highlighting the benefits of three-color photometry of stars. Project PANOPTES will adopt this photometric pipeline and, we hope, be used by citizen scientists worldwide. Our aim is for AutoWISP to pave the way for potentially transformative contributions from citizen scientists with access to observing equipment."

Code site:

+ AutoWISP

https://github.com/kpenev/AutoWISP

+ Documentation:

https://kpenev.github.io/AutoWISP/

+ AstroWISP

https://github.com/kpenev/AstroWISP

https://pypi.org/project/astrowisp/

+ Documentation

https://kpenev.github.io/AstroWISP/

#space #code #python #astrophotography #photography #science #astronomy

Thanks to Sam Van Kooten

https://github.com/svank

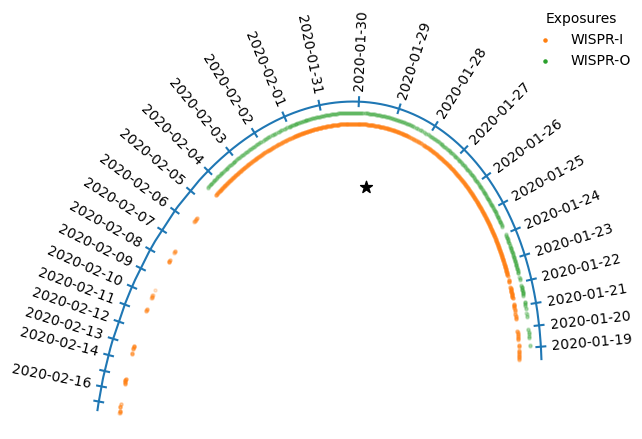

wispr-analysis

Shared tools for WISPR data analysis

Some highlights

plot_utils.py

+ plot_WISPR:

Aims to be a versatile function that does the Right Thing for plotting WISPR images, with colorbar bounds that are adjusted for inner and outer FOV and for L2 or L3 images, a square-root-scaled colorbar, and WCS coordinate support

+ *_axis_dates: Helper util for labeling a temporal axis with dates.

+ plot_orbit:

Reads a directory (or nested set of directories) of WISPR files and plots a diagram showing the orbital path of PSP and the locations where images were taken.

projections.py

+ reproject_to_radial: Proof-of-concept code for reprojecting data into a radial coordinate system (where each row of the output array is a radial line out from the Sun).

data_cleaning.py

+ dust_streak_filter: Code for identifying debris streaks in the WISPR images

+ clean_fits_files: Function to batch-run dust_streak_filter on a directory of images.

composites.py

+ gen_composite: Reprojects an inner- and outer-FOV image into a common coordinate system

utils.py

+ to_timestamp: Parse a timestamp from a handful of formats, including the timestamps inside WISPR headers, or entire WISPR filenames. Returns a numerical timestamp.

+ collect_files: Walks a directory of WISPR files (or a directory of subdirectories of WISPR images), identifies all the WISPR images, sorts them, and separates them by inner and outer FOVs.

+ ignore_fits_warnings: Suppresses the warnings Astropy raises when reading WISPR FITS files or parsing WCS data.
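As an illustration of the kind of parsing to_timestamp is described as doing, here is a minimal sketch. It is not the wispr_analysis implementation: the name `parse_timestamp`, the list of accepted formats, and the `YYYYMMDDTHHMMSS` stamp inside the example filename are all assumptions made for this example.

```python
import re
from datetime import datetime, timezone

# Assumed timestamp layouts, for illustration only.
_FORMATS = (
    "%Y-%m-%dT%H:%M:%S.%f",
    "%Y-%m-%dT%H:%M:%S",
    "%Y%m%dT%H%M%S",
)
# Assumed compact stamp embedded in a filename.
_FILENAME_STAMP = re.compile(r"\d{8}T\d{6}")


def parse_timestamp(text):
    """Return seconds since the Unix epoch (UTC) for a timestamp or filename."""
    candidates = [text]
    match = _FILENAME_STAMP.search(text)
    if match:
        candidates.append(match.group(0))
    for candidate in candidates:
        for fmt in _FORMATS:
            try:
                parsed = datetime.strptime(candidate, fmt)
            except ValueError:
                continue
            # Treat the naive datetime as UTC before converting to a number.
            return parsed.replace(tzinfo=timezone.utc).timestamp()
    raise ValueError(f"unrecognized timestamp: {text!r}")
```

Returning a plain number makes sorting and differencing image times straightforward, which is presumably why the original function returns a numerical timestamp too.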

https://github.com/svank/wispr_analysis

Documentation:

https://svank.github.io/wispr_analysis/

#space #code #python #science #astronomy #astrophysics #tech #NASA

2025-08-20

Leandro Beraldo e Silva released four days ago:

lberaldoesilva/tropygal version 0.1.4

Entropy estimates and distribution functions for galactic dynamics

tropygal is a pure-Python package for entropy estimates in the context of galactic dynamics, but it can be used in other contexts too. It also provides functions for analytical distribution functions and density of states for models that have analytical expressions.
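For readers unfamiliar with sample-based entropy estimates, here is a minimal sketch of one standard technique, the Kozachenko-Leonenko nearest-neighbour estimator, restricted to one dimension. This is an assumption chosen for illustration: the post does not say which estimators tropygal implements, and `nn_entropy_1d` is a hypothetical name, not part of its API.

```python
import math

EULER_GAMMA = 0.5772156649015329


def nn_entropy_1d(samples):
    """Nearest-neighbour (Kozachenko-Leonenko) entropy estimate in nats.

    Works on 1D samples; duplicate values give a zero distance and make
    math.log raise ValueError.
    """
    xs = sorted(samples)
    n = len(xs)
    if n < 2:
        raise ValueError("need at least two samples")
    log_sum = 0.0
    for i, x in enumerate(xs):
        # After sorting, the nearest neighbour is one of the two adjacent points.
        left = x - xs[i - 1] if i > 0 else math.inf
        right = xs[i + 1] - x if i < n - 1 else math.inf
        log_sum += math.log(min(left, right))
    # ln(2) is the log-volume of the 1D unit ball (an interval of length 2).
    return log_sum / n + math.log(2.0) + math.log(n - 1) + EULER_GAMMA
```

For samples drawn from a unit Gaussian, the estimate should approach the analytical value 0.5 * ln(2 * pi * e) as the sample size grows.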

** Acknowledgements

Development of tropygal was supported by the following research grants:

+ NASA ATP awards 80NSSC20K0509 and 80NSSC24K0938

+ U.S. NSF AAG grant AST-2009122

+ STFC Ernest Rutherford fellowship (ST/X004066/1)

+ JSPS KAKENHI Grant Numbers JP24K07101, JP21K13965, and JP21H00053

+ CNPq (309723/2020-5)

+ Heising-Simons Foundation grant #2022-3927

** Funding agencies:

+ NASA ATP - NASA Astrophysical Theory Program (US)

+ NSF - National Science Foundation (US)

+ STFC - Science and Technology Facilities Council (UK)

+ JSPS - Japan Society for the Promotion of Science (Japan)

+ CNPq - Conselho Nacional de Desenvolvimento Científico e Tecnológico (Brazil)

+ Heising-Simons Foundation (US)

https://github.com/lberaldoesilva/tropygal

https://tropygal.readthedocs.io/en/latest/

https://link.springer.com/epdf/10.1007/BF00773669?sharing_token=c10vjrwKcPemuJugFt8GPPe4RwlQNchNByi7wbcMAY4It9IZvz0cQPRbyx9xcOC67oJ1uYrQB3lur75MCDwqdPXd8sWtzve-hL8JYGekYHqhITNJ3f12mXyjbTmMIqid10HwkWoYRAWdltK0BTwIew%3D%3D

https://www.mdpi.com/1099-4300/18/1/13

#space #code #python #science #astronomy #astrophysics #tech #NASA

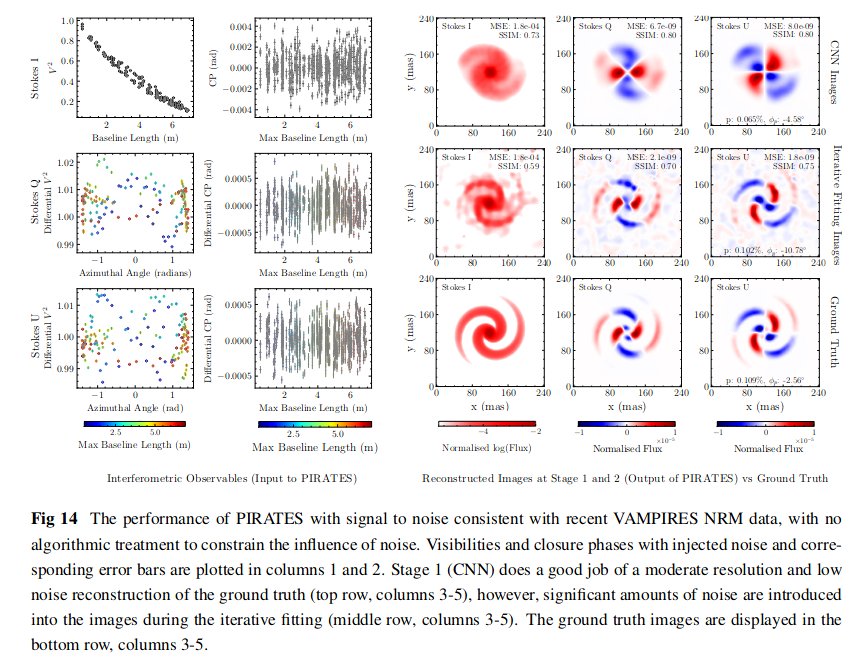

PIRATES

(Polarimetric Image Reconstruction AI for Tracing Evolved Structures)

uses machine learning to perform image reconstruction.

It uses MCFOST to generate models, then uses those models to build, train, iteratively fit, and evaluate PIRATES performance.

Optical interferometric image reconstruction is a challenging, ill-posed optimization problem which usually relies on heavy regularization for convergence. Conventional algorithms regularize in the pixel domain, without cognizance of spatial relationships or physical realism, with limited utility when this information is needed to reconstruct images. Here we present PIRATES (Polarimetric Image Reconstruction AI for Tracing Evolved Structures), the first image reconstruction algorithm for optical polarimetric interferometry. PIRATES has a dual structure optimized for parsimonious reconstruction of high fidelity polarized images and accurate reproduction of interferometric observables. The first stage, a convolutional neural network (CNN), learns a physically meaningful prior of self-consistent polarized scattering relationships from radiative transfer images. The second stage, an iterative fitting mechanism, uses the CNN as a prior for subsequent refinement of the images with respect to their polarized interferometric observables. Unlike the pixel-wise adjustments of traditional image reconstruction codes, PIRATES reconstructs images in a latent feature space, imparting a structurally derived implicit regularization.

https://github.com/LucindaLilley/PIRATES

https://ui.adsabs.harvard.edu/abs/2025arXiv250511950L/abstract

https://arxiv.org/pdf/2505.11950

CREDITS:

Lilley, Lucinda ; Norris, Barnaby ; Tuthill, Peter ; Spalding, Eckhart ; Lucas, Miles ; Zhang, Manxuan ; Millar-Blanchaer, Maxwell ; Pinte, Christophe ; Bottom, Michael ; Guyon, Olivier ; Lozi, Julien ; Deo, Vincent ; Vievard, Sébastien ; Wong, Alison P. ; Ahn, Kyohoon ; Ashcraft, Jaren

#space #code #python #science #astronomy #astrophysics #tech

Be Simple - Don’t Be Clever - Code Rust

🦀 https://corrode.dev/blog/simple/

#rust #coding #simple #clever #rustlang #programming #code #clevercode #blog #codetips

"Remember that there is a distinction between a programming language and a graphical user interface. Don't confuse snazzy graphics (generated using someone else's libraries and tools) with good programming."~ Bjarne Stroustrup (C++ Inventor)

@infostorm@a.gup.pe @hacking@a.gup.pe @c@a.gup.pe @programming@a.gup.pe @dev@a.gup.pe @quotes@a.gup.pe

#BjarneStroustrup #C #cplusplus #Hacking #Hackers #Programming #Programmers #Dev #Developers #Code #Coding #quotes #quotations

![PIRATES paper abstract](https://files.defcon.social/dcsocial-s3/media_attachments/files/115/086/046/124/240/174/original/06ed149b287f93a9.png)
